Evolved artificial intelligence can play video games better than humans

Published on June 10, 2013 by Dr. Randal S. Olson



Have you ever wondered if an AI could outplay you at Space Invaders? Wonder no more. Matthew Hausknecht and his colleagues from the University of Texas at Austin report that they have evolved an AI controller that beats the highest recorded scores of several Atari games.

In their report, Hausknecht et al. explain that they created the AI controller by training an artificial neural network, a digital abstraction of how the human brain works, to play 61 Atari games and score as highly as possible in each of them. They trained the network with an evolutionary algorithm, which trains a whole group of artificial neural networks at once by simulating evolution. The networks that achieve the highest scores in the group are copied into a new group and tweaked slightly so that they perform differently (for better or for worse!). The process is then repeated over and over until one of the networks in the group can play all of the games well.
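To make that loop concrete, here is a toy sketch in Python. It is not the method from Hausknecht et al.'s report: the "network" is just a list of three weights, and `score` is a made-up stand-in for "play the game and report the score". The names `score`, `mutate`, and `evolve`, and every parameter value, are assumptions chosen for illustration. The sketch only shows the general recipe of keeping the best performers, copying them, tweaking the copies, and repeating.

```python
import random


def score(weights):
    # Hypothetical stand-in for playing a game: the closer the weights are
    # to a hidden target, the higher (less negative) the score.
    target = [0.5, -0.2, 0.8]
    return -sum((w - t) ** 2 for w, t in zip(weights, target))


def mutate(weights, rng, step=0.1):
    # Tweak every weight slightly; the change may help or hurt.
    return [w + rng.gauss(0.0, step) for w in weights]


def evolve(generations=200, group_size=30, keep=5, seed=0):
    rng = random.Random(seed)
    # Start with a group of random candidates.
    group = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(group_size)]
    for _ in range(generations):
        # Rank the group by score and keep the top performers.
        best = sorted(group, key=score, reverse=True)[:keep]
        # New group: the best as they are, plus mutated copies of them.
        children = [mutate(rng.choice(best), rng) for _ in range(group_size - keep)]
        group = best + children
    return max(group, key=score)


champion = evolve()
print(score(champion))
```

Because the top performers are carried over unchanged, the best score in the group can never get worse from one generation to the next. A real controller would replace `score` with actual gameplay and the weight list with a full neural network.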

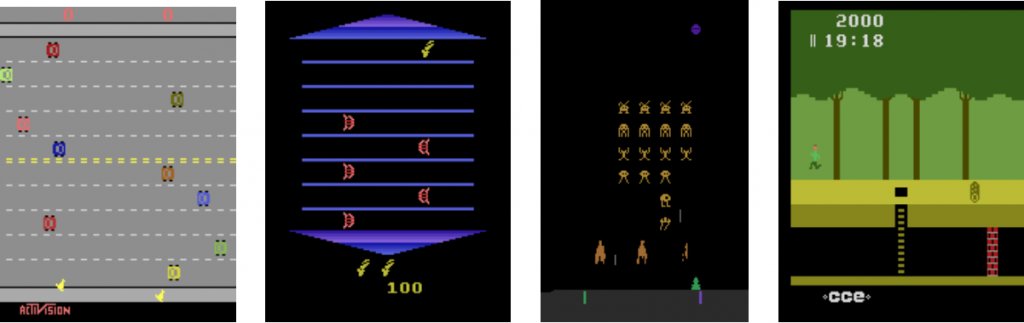

Freeway, Asterix, Space Invaders, and Pitfall were just a few of the 61 Atari games that the evolved AI controller learned to play. Pictures from Hausknecht et al.'s report.

After several days of virtual training, the AI controller had undergone a training montage equalling that of Rocky Balboa. While at first the best AI controller could barely figure out how to play the games, it eventually stepped into the ring and took the high-score title for several of the Atari games. This performance is particularly impressive because it was accomplished with the same AI controller design for every game, whereas researchers usually have to customize their AI design for each game.

Videos of the record-breaking AI controller

The evolved AI scores 407,864 in Pinball, blowing the best human score of 56,851 out of the water.

In Bowling, the AI controller knocks down 252 pins, a whole 13 pins more than the best human score.

Even though the AI controller didn't beat the highest recorded scores for many of the games, it learned to play all 61 games well enough to achieve a respectable score. It even learned a few clever tactics, Hausknecht reports, such as "an exploitative return on Pong that the opponent can't keep up with."

The evolved AI controller (green paddle on right) learned to soundly defeat the hard-coded AI in Pong by using an exploitative return that the opponent cannot keep up with.

A rogue AI won't be taking over the world any time soon, but there's nothing protecting your high score in Asteroids.

See more videos at Hausknecht's web page.