The Claude Code leak in four charts: half a million lines, three accidents, forty tools

Part of Teaching an AI Agent to Make Beautiful Charts

Version 2.1.88 of @anthropic-ai/claude-code hit npm on March 31, 2026, with a production source map still attached. The Register reports security researcher Chaofan Shou spotted the exposure early Tuesday; the map pointed at a zip archive on Anthropic’s Cloudflare R2 storage, and decompressing it recovered the full TypeScript tree. Within hours the story was everywhere. Anthropic told reporters the same thing it tells everyone after these slips: packaging mistake, human error, no customer credentials in the bundle. Fair enough, but once the artifact was out, the internet did what the internet does.

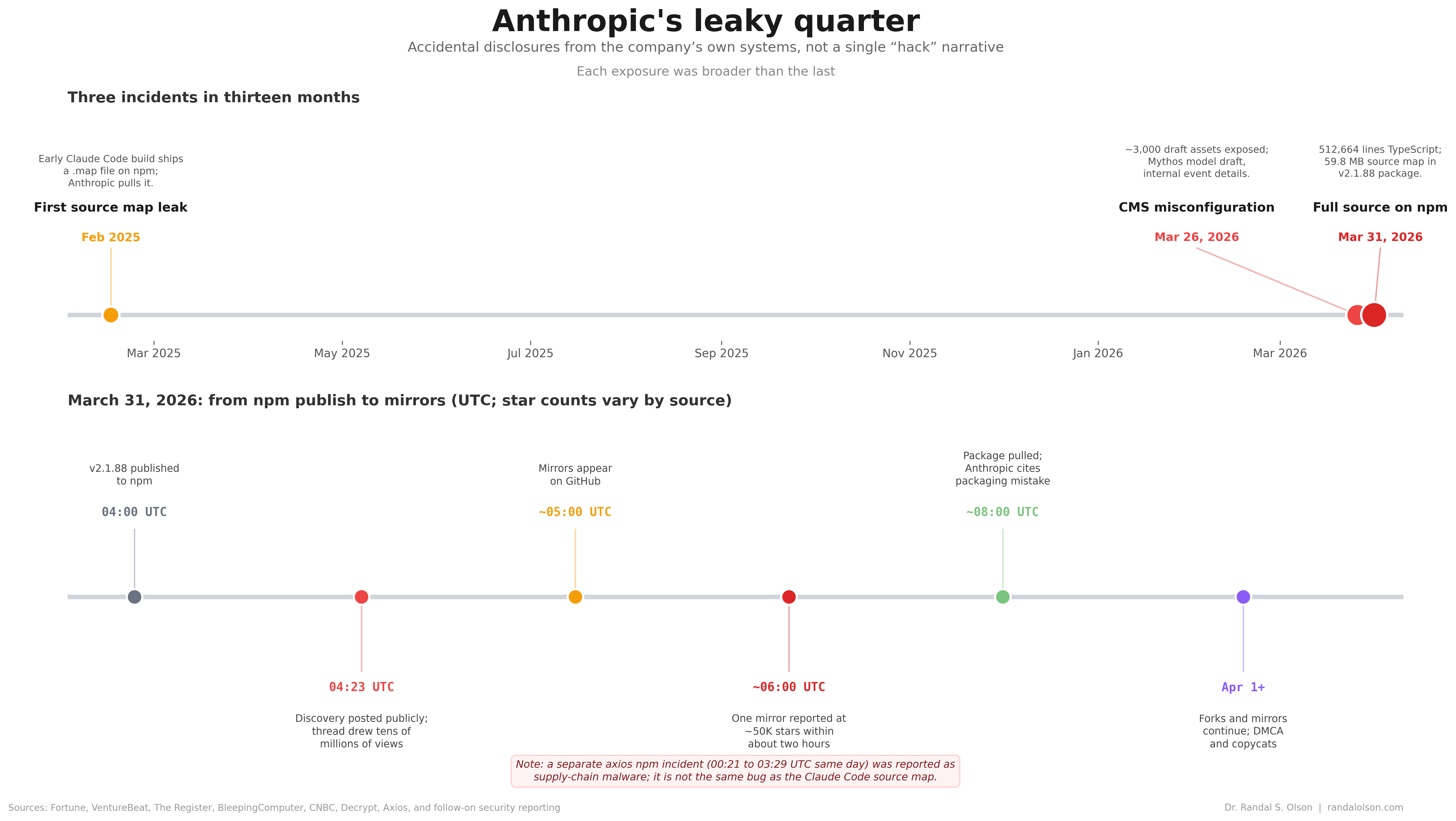

We have been here before. In February 2025, developer Dave Schumaker opened an early cli.mjs, spotted a fat inline sourceMappingURL, went to a vet appointment, and came back to find Anthropic had already yanked the string in a patch. He recovered the map from an undo buffer in Sublime Text. That is not a hack narrative. It is a release-engineering story that repeated on a bigger stage.
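For readers who have not looked inside one: a Source Map v3 file is JSON whose `sources` array lists original file paths and whose optional `sourcesContent` array, index for index, can carry the original text. When a bundler inlines the map as a base64 `data:` URL in a `sourceMappingURL` comment, which is presumably what a single eighteen-million-character line in cli.mjs was, recovery is just decoding. The sketch below assumes exactly that inline case with `sourcesContent` populated. `extract_inline_sources` is a hypothetical helper, not Schumaker's actual procedure, and it does not cover the 2026 variant, where the map pointed at an external archive.

```python
import base64
import json
import re

# Trailing inline source-map comment, e.g.
# //# sourceMappingURL=data:application/json;base64,<payload>
INLINE_MAP = re.compile(
    r"//[#@] sourceMappingURL=data:application/json"
    r"(?:;charset=[^;,]+)?;base64,([A-Za-z0-9+/=]+)"
)


def extract_inline_sources(bundle_text: str) -> dict[str, str]:
    """Return {original_path: original_text} from an inline base64 source map.

    Returns an empty dict when there is no inline map or when the map
    carries no embedded sourcesContent.
    """
    payloads = INLINE_MAP.findall(bundle_text)
    if not payloads:
        return {}
    # Use the last match; a bundle normally carries a single map comment.
    source_map = json.loads(base64.b64decode(payloads[-1]))
    sources = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    # sourcesContent runs parallel to sources; null entries mean "not embedded".
    return {
        path: text
        for path, text in zip(sources, contents)
        if text is not None
    }
```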

By 2026 the CLI was not a total black box anyway: The Register notes community reverse-engineering efforts and sites like CCLeaks had already been cataloging unreleased corners, so the zip mattered most as a fresh canonical snapshot to diff against, not as a debut.

I pulled one mirror of 2.1.88, counted 512,664 lines across 1,884 TypeScript and TSX files, and split that snapshot four ways. None of this is an official Anthropic drop. It is a frozen tarball people can agree on while the fork count climbs. Below: the directory tree, the leak timeline (including the dress rehearsal), the odd buddy/ corner, and the forty tool modules, plus a short closing section on how the charts were built.
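Line totals like these depend on counting conventions (blank lines, trailing newlines, whether `.d.ts` files count), so two people can get slightly different numbers from the same tarball. Here is a minimal sketch of one convention, assuming the mirror is unpacked under a local directory: every line of every `.ts` or `.tsx` file counts, grouped by top-level directory. The helper names are hypothetical, and this is not necessarily the script behind the 512,664 figure.

```python
from collections import Counter
from collections.abc import Iterable, Iterator
from pathlib import Path


def iter_ts_files(root: str, suffixes=(".ts", ".tsx")) -> Iterator[tuple[str, str]]:
    """Yield (path relative to root, file text) for TypeScript/TSX files."""
    root_path = Path(root)
    for path in sorted(root_path.rglob("*")):
        # Note: "foo.d.ts" has suffix ".ts", so declaration files are included.
        if path.is_file() and path.suffix in suffixes:
            rel = path.relative_to(root_path).as_posix()
            yield rel, path.read_text(encoding="utf-8", errors="replace")


def lines_by_top_dir(files: Iterable[tuple[str, str]]) -> tuple[Counter[str], int]:
    """Sum line counts per top-level directory; also return the file count.

    Files directly under the root are grouped under ".". str.splitlines()
    counts a final line with no trailing newline, so totals can differ
    slightly from `wc -l`.
    """
    per_dir: Counter[str] = Counter()
    n_files = 0
    for rel, text in files:
        parts = rel.split("/")
        top = parts[0] if len(parts) > 1 else "."
        per_dir[top] += len(text.splitlines())
        n_files += 1
    return per_dir, n_files
```

Usage would look like `per_dir, n_files = lines_by_top_dir(iter_ts_files("path/to/mirror/src"))`, where the path is a placeholder. Keeping the filesystem walk separate from the counting makes the counting rule easy to test and easy to swap.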

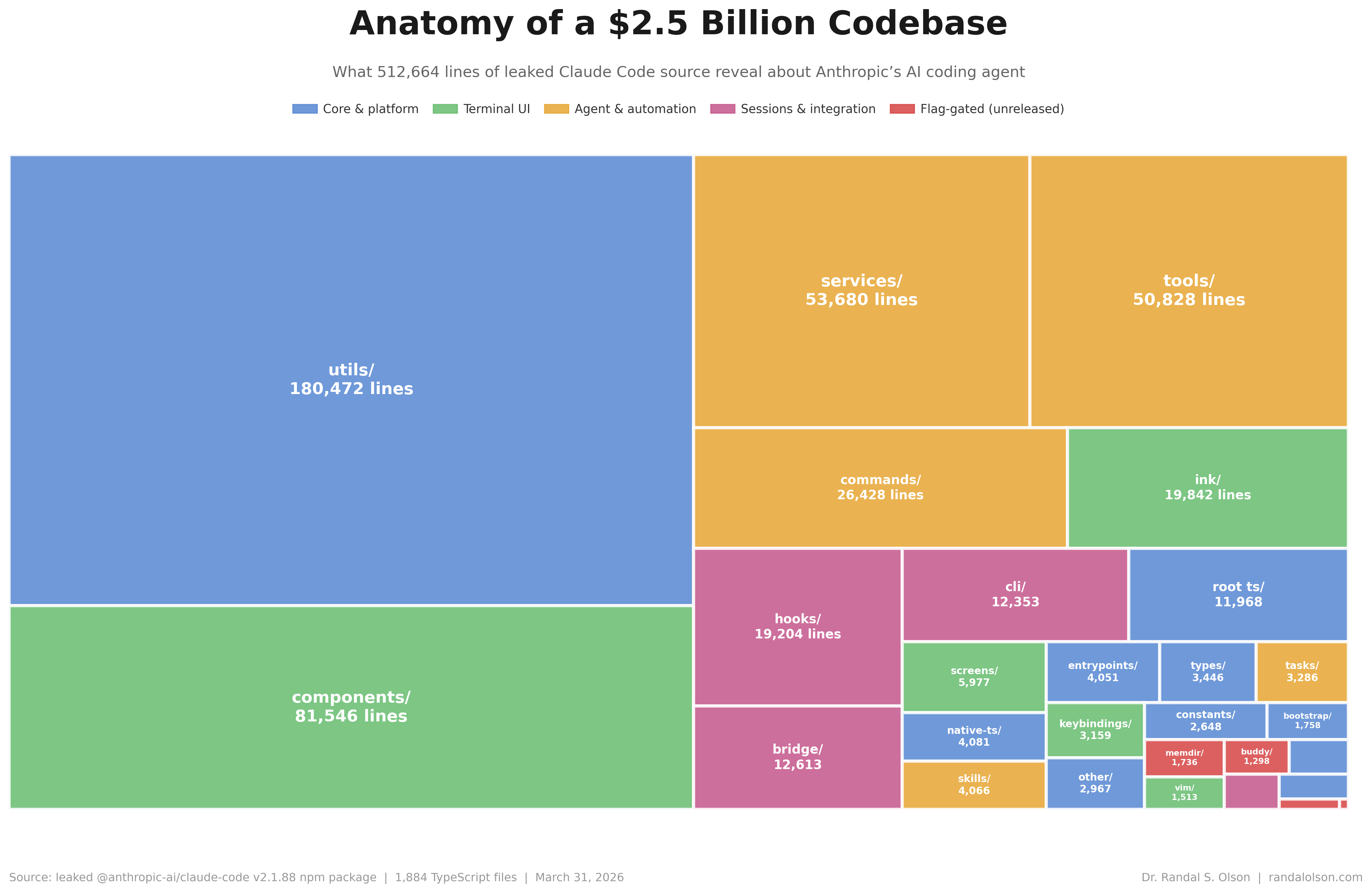

The money is in the plumbing, not the keynote slide

utils/ is roughly 180k lines, a little over a third of the tree. Add components/, services/, tools/, and commands/, and you have the shape of a serious desktop agent: React-style terminal UI (Ink shows up in writeups on the first leak), service glue, and a thick tool layer where the product actually touches the filesystem and shell. hooks/, bridge/, and cli/ pile on another ~44k lines together: session hooks, IDE bridge glue, and the entry wiring that turns “agent in your repo” from a slogan into integrations. The Register pegged the March drop at about 512k lines in ~1,900 files, same ballpark as this count.
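As a quick check on those proportions against the 512,664-line total (the 180k and 44k inputs are rounded, so treat the third digit as soft):

$$
\frac{180{,}000}{512{,}664} \approx 0.351
\qquad
\frac{44{,}000}{512{,}664} \approx 0.086
$$

So utils/ alone is about 35 percent of the tree, and hooks/, bridge/, and cli/ together add roughly 9 percent.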

The red wedge looms in screenshots but vanishes in the totals. In this mirror, memdir/ edges out buddy/ on raw line count; only those two earn treemap labels among the flag-gated dirs, while coordinator/ and voice/ sit in the low thousands inside a half-million-line tree. Great fuel for rumor threads, useless for sizing what actually ships.

Three accidents, no villain, escalating stakes

February 2025 was the dress rehearsal. Schumaker’s post is worth reading for the small absurdities: eighteen million characters of base64 in one line, npm cache archaeology, then undo in an editor saving the day. Anthropic patched fast. The lesson did not stick.
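A cheap pre-publish guard is one way to make a lesson like this stick. The sketch below assumes the JSON that `npm pack --dry-run --json` prints (an array of packages, each with a `files` list of objects carrying a `path`); check that shape against your npm version before relying on it. It flags packed `.map` files and, separately, any `sourceMappingURL` comment in a built file. The function names are hypothetical, and this is not a description of Anthropic's release pipeline.

```python
import json
import re

# Matches both the modern "//#" and the legacy "//@" source-map comment forms.
SOURCE_MAP_REF = re.compile(r"//[#@] sourceMappingURL=")


def flag_source_maps(pack_json: str) -> list[str]:
    """Return packed file paths that end in .map.

    Assumes pack_json has the shape printed by `npm pack --dry-run --json`:
    a JSON array of packages, each with a "files" list of {"path": ...}.
    """
    flagged = []
    for package in json.loads(pack_json):
        for entry in package.get("files", []):
            path = entry.get("path", "")
            if path.endswith(".map"):
                flagged.append(path)
    return flagged


def has_source_map_comment(js_text: str) -> bool:
    """True if a built file still references a source map, inline or external."""
    return bool(SOURCE_MAP_REF.search(js_text))
```

In CI, one option is to feed `flag_source_maps` the stdout of `subprocess.run(["npm", "pack", "--dry-run", "--json"], capture_output=True, text=True, check=True)` and fail the job when the list is non-empty. The `.map` check targets the 2026 shape, where a map file shipped in the package; the comment check targets the 2025 shape, where the map was inlined in the bundle.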

Five days before the npm fire drill, Anthropic’s CMS leaked marketing drafts. Fortune reported that Anthropic acknowledged testing a new model after researchers found a misconfigured content store. InfoWorld walked through draft copy describing a phased rollout aimed first at security teams, plus the odd detail that internal naming still said “Capybara” in places. Different failure mode than npm, same theme: internal material treated as public by default.

March 31 put the CLI on front pages. The timeline’s lower panel is hour-scale UTC: publish, viral post, mirrors, takedown language. The Register reported that one early GitHub mirror had already been forked more than 41,500 times. The same piece notes that an uploader later repurposed his repository into a Python feature port over intellectual-property liability worries, while forks and mirrors kept spreading. InfoWorld quoted Tanya Janca on why this hurts more than a random npm typo: high-value IP means attackers can skip slow reverse engineering and hunt logic bugs in plain text. That is the real cost story behind the fork-and-mirror scramble.

After the architecture story and that incident arc, the same mirror still has room for something that does not match the press-release voice at all.

Enterprise SKU, gacha mechanics

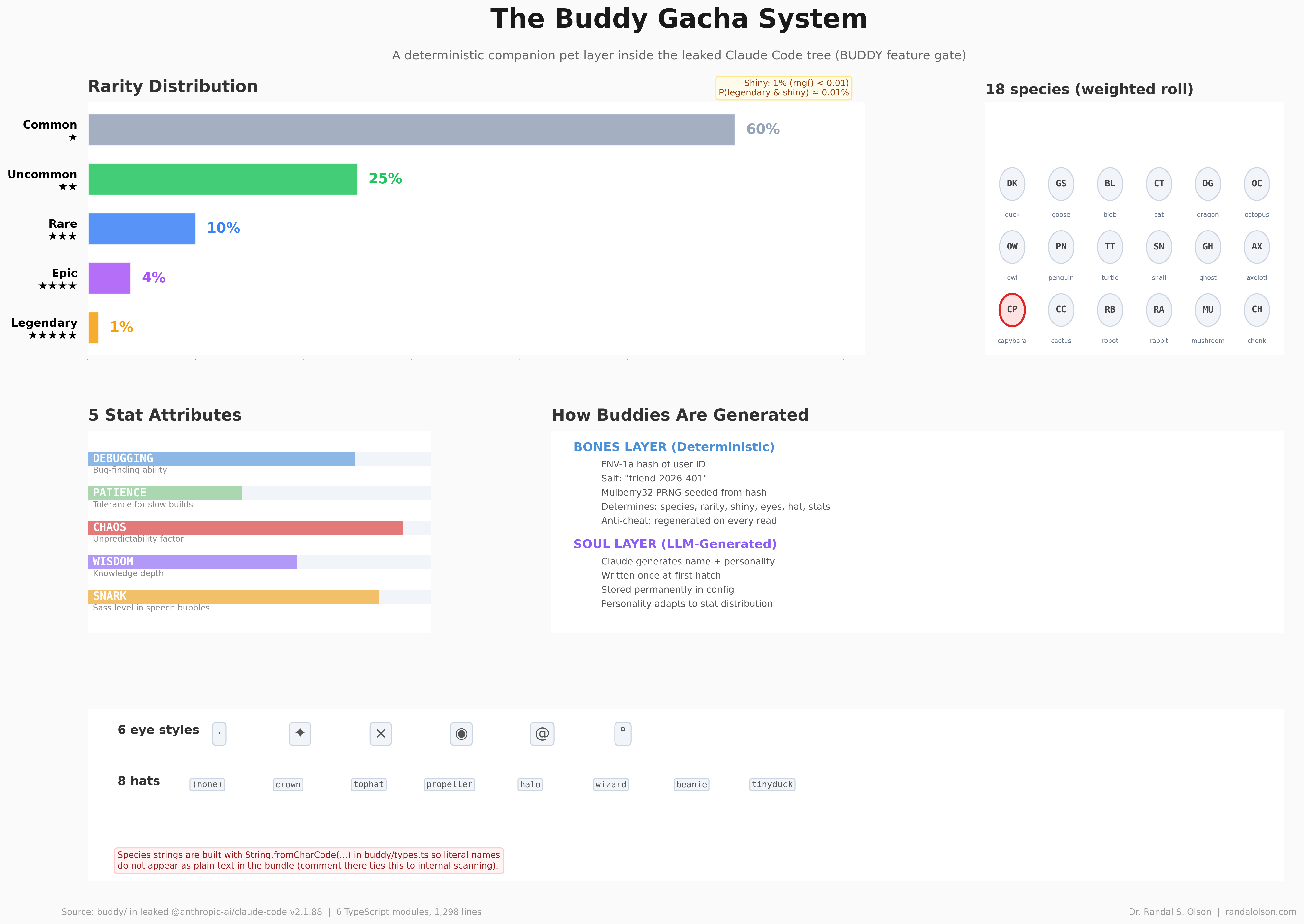

The buddy/ subtree is a weighted collectible game: eighteen species, five rarity tiers, hats, eye glyphs, stats named like inside jokes. The chart reproduces the rarity probabilities baked into the mirror’s buddy/types.ts (60 / 25 / 10 / 4 / 1 percent). It reads like a side project smuggled into a repo that otherwise worries about JWT bridges and task orchestration.
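Weights like 60 / 25 / 10 / 4 / 1 map directly onto a weighted draw. The sketch below uses Python's `random.choices` with those weights. The tier names are placeholders, and nothing in it is copied from buddy/types.ts, which may structure the roll differently.

```python
import random
from collections import Counter

# Rarity weights from the chart, in percent. Tier names are hypothetical
# placeholders, not the identifiers used in buddy/types.ts.
RARITY_WEIGHTS = {
    "tier_1": 60,
    "tier_2": 25,
    "tier_3": 10,
    "tier_4": 4,
    "tier_5": 1,
}
TIERS = list(RARITY_WEIGHTS)
WEIGHTS = list(RARITY_WEIGHTS.values())


def roll_rarity(rng: random.Random) -> str:
    """Draw one tier with probability proportional to its weight."""
    return rng.choices(TIERS, weights=WEIGHTS, k=1)[0]


def simulate(n: int, seed: int = 0) -> dict[str, float]:
    """Roll n times with a seeded RNG and return each tier's observed share."""
    rng = random.Random(seed)
    counts = Counter(roll_rarity(rng) for _ in range(n))
    return {tier: counts[tier] / n for tier in TIERS}
```

At 1 percent, the rarest tier takes 100 rolls on average to first appear (a geometric distribution has mean 1/p), so a seeded simulation of tens of thousands of rolls is a reasonable way to sanity-check that a chart's bars match the table.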

Compile-time gates mean this may never ship as-is, or may ship under another flag. The point is not to predict the roadmap. The point is that the leak lets everyone price the gap between press-release Claude and the culture encoded in the tree.

Forty tools, one attack surface narrative

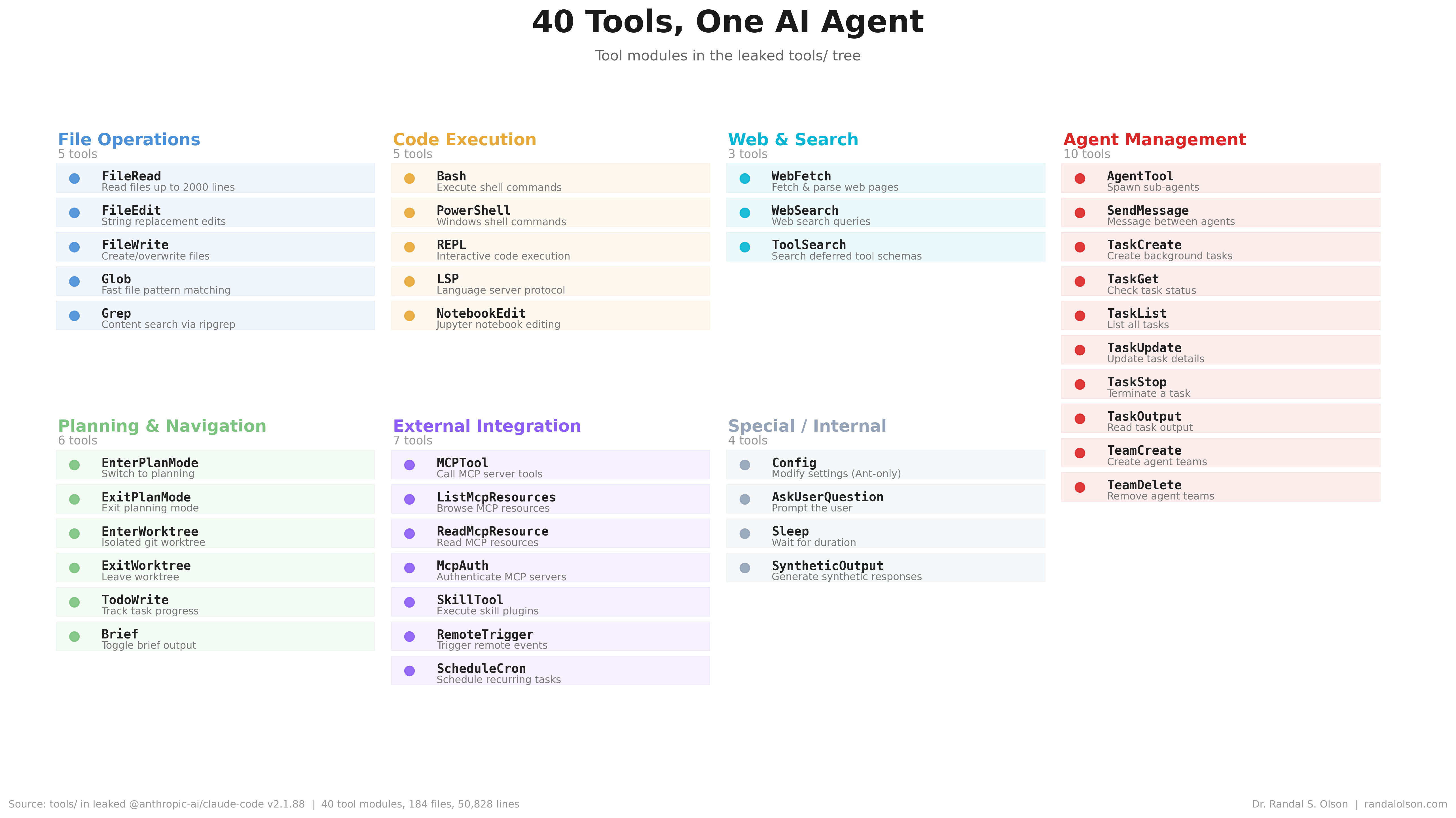

tools/ is about 50.8k lines in 184 files and forty concrete modules. The chart groups them the way the source does: file IO, shells and REPL, web fetch and search, multi-agent task plumbing, plan and worktree modes, MCP and skills hooks, and the small Special / Internal slice (config, user prompts, sleep, synthetic output).

If you care how an agent can move laterally on a machine, this panel is the table of contents. If you care how regulators think about “what the model can do,” it is the same list, just with line counts attached.
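Rolling per-module counts up into the chart's groups is a small aggregation step. In the sketch below, both inputs are assumptions: a mapping from module name to line count (however you counted it) and a hand-written mapping from module name to a group label such as file IO or Special / Internal. The function name is hypothetical. Unmapped modules fall into an explicit Uncategorized bucket rather than disappearing, and results come back largest first, the order a horizontal bar chart usually wants.

```python
from collections import defaultdict


def category_totals(
    module_lines: dict[str, int], category_of: dict[str, str]
) -> list[tuple[str, int, float]]:
    """Roll module line counts up into categories, largest first.

    Returns (category, lines, share_of_total) tuples. Modules missing from
    category_of land in "Uncategorized" so nothing silently drops out.
    """
    totals: dict[str, int] = defaultdict(int)
    for module, lines in module_lines.items():
        totals[category_of.get(module, "Uncategorized")] += lines
    grand_total = sum(totals.values()) or 1  # avoid dividing by zero on empty input
    # Sort by size descending, then by name so ties are stable.
    ranked = sorted(totals.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(cat, n, n / grand_total) for cat, n in ranked]
```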

How this chart was made

An AI agent produced these four matplotlib figures as part of the Beautiful Charts with AI series. Each view was refined against the Tufte Test, a data visualization quality standard built by Goodeye Labs on Truesight. The treemap uses squarify; the timeline uses proportional dates on the top axis and a normalized hour strip below; the buddy and tools panels are layout code driven by tables in the mirrored tree.
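The two timeline axes reduce to two small mappings. One plausible reading of "proportional dates" and "a normalized hour strip" is that the top axis places each event by elapsed time between a chosen start and end, and the lower strip places each March 31 event by its fraction of the UTC day. This is a sketch under that assumption with hypothetical function names, not the agent's actual layout code; the resulting values in [0, 1] can be passed to matplotlib as x positions.

```python
from datetime import datetime, timezone


def proportional_x(when: datetime, start: datetime, end: datetime) -> float:
    """Map a timestamp to [0, 1] by elapsed time between start and end.

    Pass timezone-aware datetimes; mixing naive and aware values raises.
    """
    span = (end - start).total_seconds()
    if span <= 0:
        raise ValueError("end must be after start")
    return (when - start).total_seconds() / span


def hour_strip_x(when: datetime) -> float:
    """Fraction of the UTC day elapsed: 00:00 -> 0.0, 12:00 -> 0.5.

    A naive datetime is interpreted as local time by astimezone(), so
    pass aware datetimes to get true UTC positions.
    """
    utc = when.astimezone(timezone.utc)
    seconds = utc.hour * 3600 + utc.minute * 60 + utc.second
    return seconds / 86_400
```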

Data source: aggregated counts from a community mirror of @anthropic-ai/claude-code@2.1.88 (npm publication March 31, 2026). The directory totals table is available here; the tool module listing is available here.

Dr. Randal S. Olson

AI Researcher & Builder · Co-Founder & CTO at Goodeye Labs

I turn ambitious AI ideas into business wins, bridging the gap between technical promise and real-world impact.